Paul von Hippel wrote:

Stuart Buck noticed your recent post on a Westlaw for science. This is something Stuart and I started talking about last year, and Stuart, who trained as a lawyer, believes it was first suggested to him by a law professor about 15 years ago.

Since the 19th century, the legal profession has used citation indexes that do more than count citations and match keywords. Resources like Shepard's Citations, first printed in 1873 and now available online alongside competing tools such as JustCite, KeyCite, BCite, and SmartCite, do more than find relevant cases and statutes. They tell lawyers whether a case or statute is still "good law." Legal citation indexes show lawyers which cases have been cited approvingly by later courts, and which have been criticized, reversed, or overruled.

Although Shepard's Citations inspired the first Science Citation Index in 1960 and later tools such as Google Scholar, today's academic search engines still rely primarily on citation counts and keywords. As a result, many scientists are like lawyers who walk into court without knowing that the case at the center of their argument has been overruled.

Kind of, but not completely. The key difference is that in court there is a reasonable chance that the opposing attorney or the judge will notice that an important case has been overruled, in which case your argument relying on that case will fail. So there is a clear incentive not to rely on overruled cases. In science, though, there are no opposing lawyers and no judges. You can build an entire career on research that can't be replicated, and that's totally fine, as long as you don't pull any really ridiculous stunts.

Von Hippel continues:

Here are some related articles published recently.

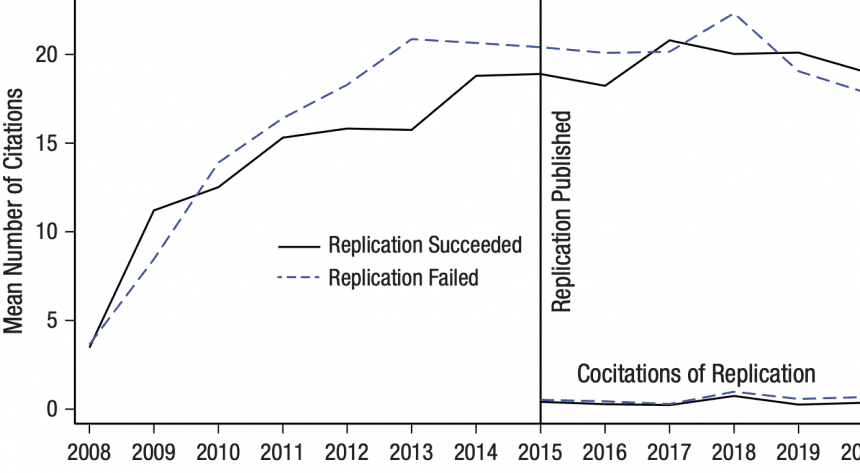

One is "Does psychology self-correct?", which reports that whether a replication study succeeds or fails, it is unlikely to have much effect on citations of the replicated study. When a finding fails to replicate, most influential studies keep collecting citations at the same rate year after year, as if no replication had been attempted. This problem is not limited to psychology, and it raises serious questions about how quickly the scientific community corrects itself and whether replication studies are having the corrective effect we hope for. I considered several possible reasons for the persistent influence of studies that failed to replicate, and concluded that academic search engines like Google Scholar may be part of the problem: Google Scholar favors highly cited papers regardless of whether they replicate, which perpetuates the influence of questionable findings.

The finding that replication does not affect citations is itself fairly well replicated. A recent blog post by Bob Reed of the University of Canterbury in New Zealand summarizes five recent papers, covering psychology, economics, and Nature/Science publications, that have shown more or less the same thing.

In a second article, published last week in Nature Human Behaviour, Stuart Buck and I suggest ways to improve academic search engines to reduce scholars' biases. We propose that the next generation of academic search engines should go beyond counting citations and help scholars assess the rigor and trustworthiness of research. We also suggest that future engines should be transparent, responsive, and open source.

This seems like a reasonable suggestion. The good news is that their hypothetical new search engine doesn't have to dominate or replace existing products. People can use Google Scholar to find the most cited papers and use the new one to get information about rigor and trustworthiness. A nudge in the right direction, you might say.